Key Takeaway

Risk Mitigation

Learn how to test new strategies on small segments (1-5%) to prevent large-scale defaults

Implementation

A step-by-step guide to setting up the "A/B Testing" pattern using the DecisionRules template

Flexibility

Discover how to apply testing logic both outside of rules (flow control) and inside specific decision tables

Deploying unverified credit risk strategies is a gamble no financial institution should take. In this guide, you will learn how to implement robust A/B testing (Champion/Challenger) patterns directly in your decision engine. By the end of this article, you will be able to run compliant, data-driven experiments to optimize your portfolio without waiting for IT release cycles.

Why Traditional Risk Management Fails at Speed

In the traditional world of hard-coded banking systems, testing a new credit score model is often a bureaucratic nightmare. Risk managers define a new policy, but then face a "black box" implementation process:

- The "IT Bottleneck": You request a change, but the deployment cycle takes weeks or months.

- The "Big Bang" Risk: Without granular testing capabilities, you are forced to deploy changes to 100% of the traffic, risking a sudden spike in default rates.

- Blind Spots: You lack the real-time feedback loop to know if your new "Challenger" model is actually outperforming the existing "Champion."

The ability to run fast, transparent A/B tests is not just a luxury; it is a survival mechanism for modern lending because it delivers:

- Risk Mitigation: Stop guessing. Prevent exposure to flawed models by isolating them to a micro-segment (e.g., 5% of applicants) before full rollout.

- Profitability: Identify the exact credit score cut-off that maximizes acceptance without increasing bad debt.

- Auditability: Every test is a versioned, traceable artifact. This satisfies strict model governance and compliance requirements (e.g., OCC, Basel).

Business rule engines (BREs) such as DecisionRules solve this by decoupling the logic from the application code, allowing you to switch strategies instantly. But without a structured pattern, even a BRE can become messy. Here is how to do it right.

Seamless A/B Testing with DecisionRules: Your Risk Strategy Upgrade

DecisionRules transforms the A/B testing landscape for risk management, making it accessible, agile, and robust. Our pre-built "A/B Testing" template gives business users:

- Rapid Deployment: Instantly set up and activate multiple test groups (e.g., Champion vs. Challenger) without IT involvement.

- Total Control: Configure test group assignments based on dynamic inputs directly within DecisionRules, ensuring stability and consistency.

- Unmatched Flexibility: Apply test groups at any decisioning layer – whether it's routing entire application paths (e.g., to different scorecards) or dynamically adjusting parameters within a single rule.

The intuitive DecisionRules A/B Testing template provides a clear overview, allowing business analysts to visually manage and configure test groups. This eliminates the need for complex coding, making sophisticated risk experiments a reality for everyone.

Implementing A/B Testing: A Step-by-Step Guide

Implementing a robust A/B testing framework in DecisionRules involves three key components: Test Group Definition, Pseudo-Random Number Generation, and Decision Flow Orchestration.

1. Defining Your Test Groups

DecisionRules provides a dedicated "A/B Testing" template designed for precise test group allocation. You can find this template within the Financial Services section of "Templates and Examples" in the DecisionRules app.

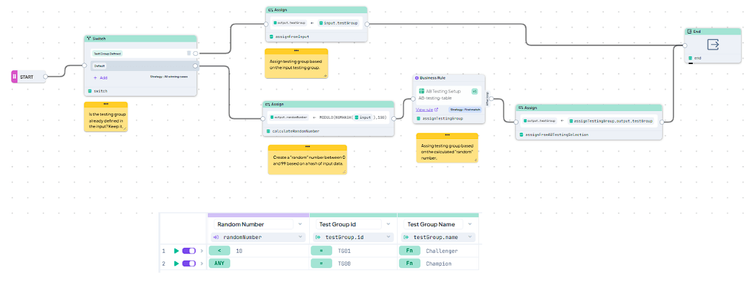

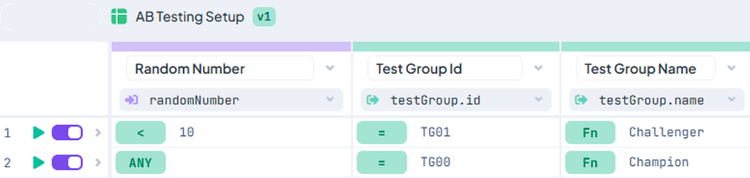

The core of your test group setup is an "AB Testing Setup" Decision Table. Here, you define the percentage allocation for each test group by specifying the range of values (between 0 and 99) that the pseudo-random number must fall into. Because this range spans 100 values, each value corresponds to roughly 1% of traffic. This gives you granular control: for example, the range 0 to 9 assigns a "Challenger" strategy to approximately 10% of your applications.

Example setup in the AB Testing Setup Decision Table, where a Challenger strategy is applied to 10% of applications. Adjusting the range is all it takes to allocate a specific percentage of traffic to the Challenger group.
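
To make the range arithmetic concrete, here is a minimal Python sketch of how a setup table like this could be evaluated. The row layout, the group IDs, and the names `TestGroupRow`, `assign_group`, and `allocation_percent` are hypothetical illustrations rather than the template's actual schema; the sketch assumes integer values from 0 to 99 and inclusive ranges.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TestGroupRow:
    """One row of a hypothetical AB Testing Setup table (inclusive range)."""
    range_from: int
    range_to: int
    group_name: str
    group_id: int


# Hypothetical setup: values 0-9 (10 of 100) -> Challenger, 10-99 -> Champion.
SETUP_TABLE: List[TestGroupRow] = [
    TestGroupRow(0, 9, "Challenger", 2),
    TestGroupRow(10, 99, "Champion", 1),
]


def assign_group(random_number: int, table: List[TestGroupRow] = SETUP_TABLE) -> TestGroupRow:
    """Return the first row whose inclusive range contains random_number."""
    for row in table:
        if row.range_from <= random_number <= row.range_to:
            return row
    raise ValueError(f"No test group covers {random_number}")


def allocation_percent(row: TestGroupRow) -> int:
    """Number of values (out of 100) the row covers, i.e. its expected traffic share in %."""
    return row.range_to - row.range_from + 1
```

With this layout, a group's expected share is simply the width of its range, so widening the Challenger row to 0-19 (and starting the Champion row at 20) would double the Challenger's expected share to 20%.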

2. Generating a Stable Pseudo-Random Number

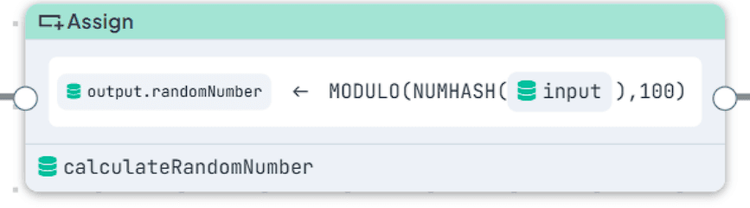

For consistent and stable test group assignments, a pseudo-random number is generated based on a hash of your input data. This ensures that the same input (e.g., `applicationId`, `clientId`, `session`, `cookies`) consistently falls into the same test group. This "sticky session" behavior is crucial for accurate experiment results.

Generating a pseudo-random number. The hash function ensures that a given input combination always produces the same output, guaranteeing stable test group assignment across multiple calls for the same applicant or session.
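
As an illustration of the idea (not the hash function DecisionRules actually uses internally), the Python sketch below derives a stable bucket between 0 and 99 from a SHA-256 digest of the joined inputs. The function name `stable_bucket` and the `|` separator are assumptions made for this example.

```python
import hashlib


def stable_bucket(*inputs: str, buckets: int = 100) -> int:
    """Map an input combination to a stable pseudo-random integer in [0, buckets)."""
    # Join with a separator so ("ab", "c") and ("a", "bc") produce different keys.
    key = "|".join(inputs).encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Read the first 8 bytes as an unsigned integer and reduce it modulo the bucket count.
    return int.from_bytes(digest[:8], "big") % buckets


# The same applicant always lands in the same bucket, on every call and in every process.
bucket = stable_bucket("APP-12345", "CLIENT-678")
```

A cryptographic digest is used here instead of Python's built-in `hash()`, because `hash()` on strings is randomized per process by default and would break the sticky assignment between calls.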

3. Orchestrating with a Decision Flow

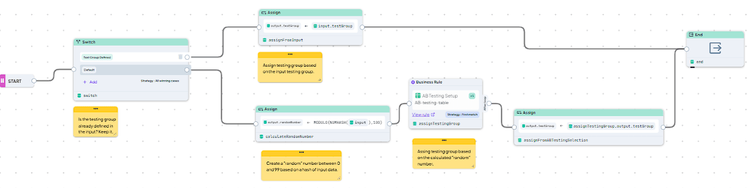

The entire A/B testing logic is orchestrated using a Decision Flow named "AB Testing". This flow intelligently handles the assignment process:

- External Override: It first checks if a test group has already been provided by the calling system, allowing for external control.

- Dynamic Generation: If no external group is defined, it generates the pseudo-random number based on the configured inputs.

- Group Assignment: Finally, it evaluates the "AB Testing Setup" table to determine and assign the correct Test Group Name and ID.

The complete "AB Testing" Decision Flow. This visual orchestration clearly shows the steps from input to final test group assignment, making the process transparent and auditable.
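
The three steps of the flow can be sketched in plain Python as follows. This is a hypothetical re-implementation for illustration only: the setup rows, the SHA-256 bucketing, the output keys, and the choice to reject an unknown external group are all assumptions, not the behavior of the actual "AB Testing" flow.

```python
import hashlib
from typing import Optional

# Hypothetical setup table: (range_from, range_to, group_name, group_id), inclusive ranges.
SETUP_TABLE = [
    (0, 9, "Challenger", 2),
    (10, 99, "Champion", 1),
]


def pseudo_random_number(*inputs: str) -> int:
    """Stable pseudo-random integer in [0, 99] derived from a SHA-256 hash of the inputs."""
    digest = hashlib.sha256("|".join(inputs).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def ab_testing_flow(application_id: str, client_id: str,
                    external_group: Optional[str] = None) -> dict:
    # Step 1: an override from the calling system wins if it names a known group.
    if external_group is not None:
        for _lo, _hi, name, group_id in SETUP_TABLE:
            if name == external_group:
                return {"testGroupName": name, "testGroupId": group_id, "source": "external"}
        raise ValueError(f"Unknown external test group: {external_group}")
    # Step 2: no override, so generate a stable number from the configured inputs.
    number = pseudo_random_number(application_id, client_id)
    # Step 3: evaluate the setup table to find the group whose range contains the number.
    for lo, hi, name, group_id in SETUP_TABLE:
        if lo <= number <= hi:
            return {"testGroupName": name, "testGroupId": group_id,
                    "randomNumber": number, "source": "generated"}
    raise ValueError(f"No test group covers {number}")
```

Recording the `source` of each assignment in the output makes it easy to exclude externally forced groups when analysing the experiment later.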

Advanced Design Notes

- Multiple Test Groups: You can easily set up multiple dependent or independent test groups using additional setup tables or extending the existing one.

- Capacity Control: For scenarios requiring control over the total number of applications or portfolio size in each group, you can add an input parameter to track counts and conditionally switch to a default group if a limit is exceeded.
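
A capacity check of this kind could look like the minimal Python sketch below. The limit values, the default group, and the function name `apply_capacity` are hypothetical; the sketch assumes the caller passes the current per-group counts in as an input parameter, as the note above describes.

```python
from typing import Dict

# Hypothetical per-group limits; groups not listed here are treated as uncapped.
CAPACITY_LIMITS: Dict[str, int] = {"Challenger": 500}
DEFAULT_GROUP = "Champion"


def apply_capacity(assigned_group: str, current_counts: Dict[str, int]) -> str:
    """Fall back to DEFAULT_GROUP once the assigned group has reached its limit.

    current_counts is assumed to be supplied by the caller (e.g. the number of
    applications already assigned to each group).
    """
    limit = CAPACITY_LIMITS.get(assigned_group)
    if limit is not None and current_counts.get(assigned_group, 0) >= limit:
        return DEFAULT_GROUP
    return assigned_group
```

The same check works for portfolio size if `current_counts` holds, for instance, total approved loan amounts per group instead of application counts. How those running totals are maintained and passed in is outside the scope of this sketch.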

Applying A/B Testing to Your Decisions

Once the test group is assigned by the "AB Testing" Decision Flow, you integrate this `testGroup` variable into your core decision logic. There are two primary patterns for application:

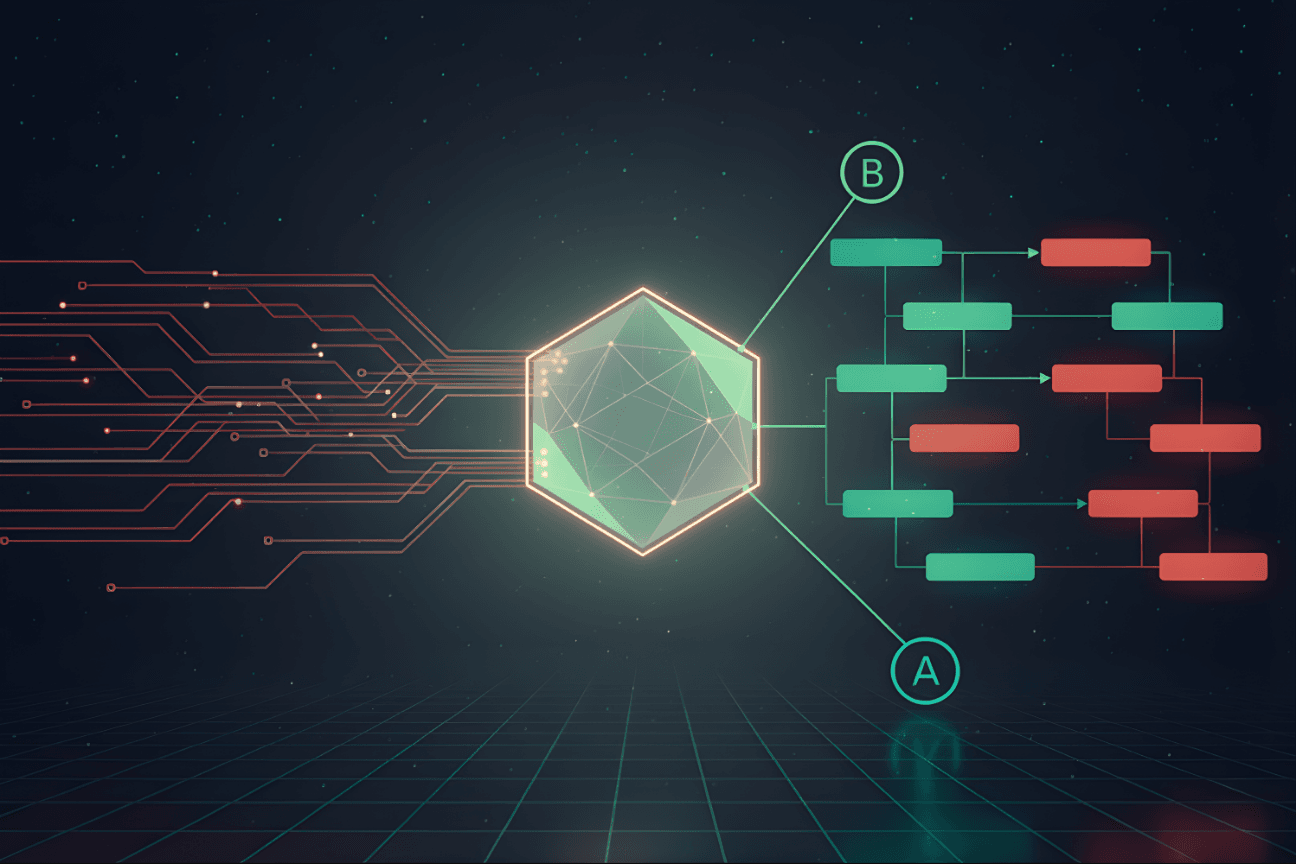

Outside of Rules (Decision Flow Control)

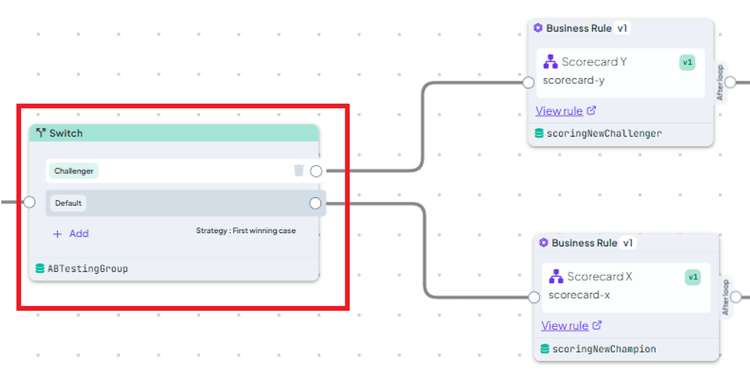

You can use the assigned `testGroup` within another Decision Flow to select entirely different rule sets or process paths. This is ideal for major strategy shifts, like routing traffic to a completely new scorecard.

Application of a Test Group in a Decision Flow to select a scorecard. Based on the assigned test group, the system dynamically selects which scorecard (e.g., "Champion Scorecard" or "Challenger Scorecard") to execute.
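
In code terms, flow-level routing amounts to using the test group to pick which rule set runs. The Python sketch below is a hypothetical illustration: both scorecards and their point values are invented purely to make the example runnable, and falling back to the Champion for an unknown group is a design assumption.

```python
from typing import Callable, Dict


def champion_scorecard(applicant: dict) -> int:
    """Hypothetical existing scorecard: points for income and credit history."""
    score = 300
    score += min(applicant["monthly_income"] // 100, 300)
    score += 10 * applicant["years_of_history"]
    return score


def challenger_scorecard(applicant: dict) -> int:
    """Hypothetical new scorecard that also penalises recent delinquencies."""
    return champion_scorecard(applicant) - 40 * applicant["recent_delinquencies"]


# Flow-level routing: the test group selects which scorecard executes.
SCORECARD_BY_GROUP: Dict[str, Callable[[dict], int]] = {
    "Champion": champion_scorecard,
    "Challenger": challenger_scorecard,
}


def run_scoring_flow(applicant: dict, test_group: str) -> dict:
    scorecard = SCORECARD_BY_GROUP.get(test_group, champion_scorecard)
    return {"testGroup": test_group, "scorecard": scorecard.__name__,
            "score": scorecard(applicant)}
```

Because each scorecard is an independent unit, the Challenger can be replaced or retired without touching the Champion path.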

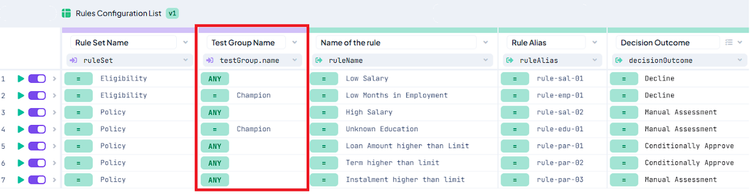

Alternatively, a configuration Decision Table can map `testGroup` to specific rules or decision paths, offering a centralized way to manage your decision routing.
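
A configuration table of this kind can be pictured as a simple lookup from test group to rule identifiers. In the Python sketch below, the column names and rule IDs such as `scorecard-v2` are hypothetical placeholders, and falling back to the default group's row is an assumed design choice.

```python
from typing import Dict, List

# Hypothetical configuration table: each row maps a test group to the rule used at each step.
ROUTING_CONFIG: List[Dict[str, str]] = [
    {"testGroup": "Champion", "scoringRule": "scorecard-v1", "pricingRule": "pricing-v1"},
    {"testGroup": "Challenger", "scoringRule": "scorecard-v2", "pricingRule": "pricing-v1"},
]


def resolve_route(test_group: str, default_group: str = "Champion") -> Dict[str, str]:
    """Look up the rules for a test group, falling back to the default group's row."""
    rows = {row["testGroup"]: row for row in ROUTING_CONFIG}
    return rows.get(test_group, rows[default_group])
```

The flow then calls whichever rule the returned row names, so changing a route means editing one table row rather than restructuring the flow.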

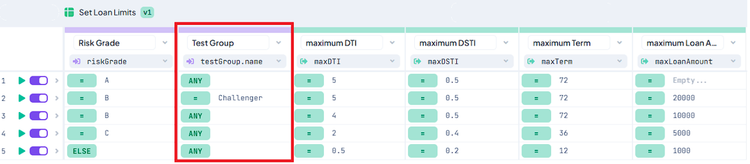

Inside Rules (Parameter Modification)

For more granular control, the `testGroup` variable can be used *inside* a Decision Table or Decision Tree to modify specific decision parameters or outcomes. For example, a "Challenger" group might receive different loan limits or interest rates directly within the same rule set.

Application of a Test Group in a Decision Table setting loan limits. Here, the `testGroup` directly influences a specific parameter (e.g., `loanLimit`), allowing for fine-tuned experimentation within a single rule.
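
The Python sketch below mimics such a table, with the test group as one of the condition columns. It assumes a first-match evaluation order and uses `None` to mean "any group"; the score bands and loan limits are hypothetical values chosen only for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical decision table rows: (test_group or None for "any", min_score, max_score, loan_limit).
# Rows are evaluated top-down and the first match wins.
LOAN_LIMIT_TABLE: List[Tuple[Optional[str], int, int, int]] = [
    ("Challenger", 700, 850, 30_000),  # Challenger tests a higher limit for strong scores
    (None, 700, 850, 25_000),
    (None, 600, 699, 10_000),
    (None, 0, 599, 0),
]


def loan_limit(test_group: str, score: int) -> int:
    for group, lo, hi, limit in LOAN_LIMIT_TABLE:
        if (group is None or group == test_group) and lo <= score <= hi:
            return limit
    raise ValueError(f"No row matches group={test_group!r}, score={score}")
```

Only the strong-score band differs between groups here, which keeps the experiment narrow: every other applicant gets the same limit regardless of group.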

Crucial Logging: Always ensure the assigned Test Group Name and ID are logged as part of your decision outputs. For enhanced analytics, consider logging additional details, such as the specific rules executed within each test path.
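
One way to make each decision analysable per test group is to emit a structured record alongside the outcome. The Python sketch below serialises such a record as a JSON line; the field names and the `build_decision_log` function are hypothetical, not a DecisionRules log format.

```python
import json
from datetime import datetime, timezone
from typing import List


def build_decision_log(application_id: str, test_group_name: str, test_group_id: int,
                       decision: str, executed_rules: List[str]) -> str:
    """Serialise one decision outcome together with its test-group assignment."""
    record = {
        "applicationId": application_id,
        "testGroupName": test_group_name,
        "testGroupId": test_group_id,
        "decision": decision,
        "executedRules": executed_rules,  # optional detail for per-path analytics
        "loggedAt": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)
```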

Advanced Design Notes

Champion/Challenger Shadowing: To compare the Champion and Challenger outcomes side by side, you can execute your decisions twice, once for each group, and capture both results in your output. This "shadow mode" is invaluable for detailed analysis without impacting live decisions, because only the assigned group's result is acted upon.
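
A minimal Python sketch of shadow mode, assuming the decision logic can be called with an explicit group: both outcomes are computed and returned, but only the live group's result drives the decision. The `example_decide` rule and its 620/650 cut-offs are hypothetical.

```python
from typing import Callable, Dict


def shadow_decision(applicant: dict, live_group: str,
                    decide: Callable[[dict, str], str]) -> Dict[str, object]:
    """Run the decision for both groups; only the live group's result is acted on."""
    results = {group: decide(applicant, group) for group in ("Champion", "Challenger")}
    return {
        "liveGroup": live_group,
        "liveDecision": results[live_group],
        "shadowResults": results,  # both outcomes, kept for offline comparison only
        "outcomesDiffer": results["Champion"] != results["Challenger"],
    }


def example_decide(applicant: dict, group: str) -> str:
    """Hypothetical rule: the Challenger uses a lower approval cut-off (620 vs 650)."""
    cutoff = 620 if group == "Challenger" else 650
    return "APPROVE" if applicant["score"] >= cutoff else "DECLINE"
```

Filtering logged records on `outcomesDiffer` isolates exactly the applicants for whom the two strategies disagree, which is where the comparison is most informative.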

Elevate Your Decision-Making: The Competitive Edge of A/B Testing

The A/B Testing pattern in DecisionRules is more than a technical workflow; it's a strategic imperative. It empowers financial institutions to move beyond guesswork and achieve **data-driven decision optimization**. By systematically testing and validating your risk strategies, you:

- Mitigate Risk: Reduce exposure to unproven models.

- Boost Profitability: Continuously refine thresholds for maximum acceptance and minimal defaults.

- Ensure Compliance: Maintain an auditable, transparent record of all strategy changes.

- Gain Agility: React swiftly to market shifts and competitive pressures.

Give your risk team the power to innovate and adapt. Turn weeks of development into hours of experimentation, transforming your decision-making process into a continuous cycle of improvement.

About the Author: Karel Švec is a Solution Consultant at DecisionRules with over 19 years of experience helping businesses manage their decisioning logic and improve efficiency. He specializes in solutions for credit decisioning, risk management, and other financial use cases.

Karel Švec

Business Analyst